updates : 2024.04.23

contents : Run Llama 3 from Meta's official open source code and the original model weights, without using Hugging Face.

Go to the official Llama website and request access to Llama 3.

https://llama.meta.com/


Click "Download models".

Fill in the form, accept all the terms, and continue.

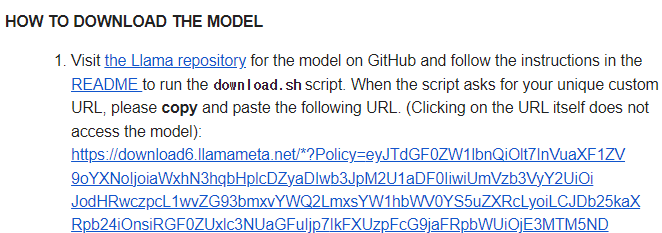

After that, we receive a URL code. It is not meant to be opened as a normal URL; it is the key used to download the model weights and tokenizer.

Next, go to Meta's GitHub repository for Llama 3 and run the model from there.

https://github.com/meta-llama/llama3


# CLI Command (terminal)

git clone https://github.com/meta-llama/llama3

cd llama3

pip install -e .

bash download.sh

# when download.sh prompts for it, paste the URL code
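Before running download.sh, it can help to confirm that the tools it depends on are installed. The sketch below assumes the script relies on `wget` and `md5sum`, as the repository README indicates; the helper name `missing_tools` is hypothetical and not part of the repository.

```shell
# Print the name of every listed tool that cannot be found on PATH.
# (missing_tools is a hypothetical helper, not part of the llama3 repo.)
missing_tools() {
    for tool in "$@"; do
        # `command -v` succeeds only when the tool can be resolved.
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}

# Assumption: download.sh needs wget and md5sum.
missing=$(missing_tools wget md5sum)
if [ -n "$missing" ]; then
    echo "Install these before running download.sh:" $missing
else
    echo "wget and md5sum found; download.sh can be run."
fi
```

If a tool is reported as missing, install it with the system package manager first, then run `bash download.sh` again.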

# after the download finishes, run the model

# example (assumes the 8B Instruct model was downloaded into Meta-Llama-3-8B-Instruct/)

torchrun --nproc_per_node 1 example_chat_completion.py \
--ckpt_dir Meta-Llama-3-8B-Instruct/ \
--tokenizer_path Meta-Llama-3-8B-Instruct/tokenizer.model \
--max_seq_len 512 --max_batch_size 6
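Before launching the torchrun command above, a quick check that the checkpoint directory is complete can save a failed start. This is a minimal sketch: the helper `check_ckpt_dir` is hypothetical, and the file names (`consolidated.00.pth`, `params.json`, `tokenizer.model`) are assumptions about what download.sh produces for the 8B model, so adjust them to match the actual download.

```shell
# Report which expected files are missing from a checkpoint directory.
# The file names are assumptions about the 8B download layout.
check_ckpt_dir() {
    dir=$1
    status=0
    for f in consolidated.00.pth params.json tokenizer.model; do
        if [ ! -f "$dir/$f" ]; then
            echo "missing: $dir/$f"
            status=1
        fi
    done
    return $status
}

# Hypothetical usage; replace the directory with the one download.sh created.
if check_ckpt_dir "Meta-Llama-3-8B-Instruct"; then
    echo "checkpoint directory looks complete"
fi
```

The function prints one line per missing file and returns a non-zero status if anything is absent, so it can also be used as a guard in front of the torchrun call.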
